Kubernetes v1.10.1 Cluster Deployment


1. Environment Preparation

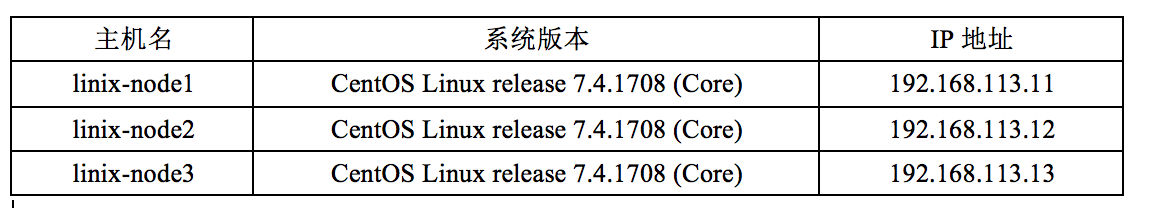

1.1 System Planning

1.2 Software Version Planning

1.3 Hosts Resolution

192.168.113.11 linux-node1
192.168.113.12 linux-node2
192.168.113.13 linux-node3
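The hosts entries above have to be present on every machine. A minimal sketch of adding them idempotently is shown below; the `add_host` helper and the `HOSTS_FILE` variable are illustrative assumptions, not part of the original procedure. By default the sketch writes to a temporary file, so it is safe to try; set `HOSTS_FILE=/etc/hosts` to apply it for real.

```shell
#!/usr/bin/env bash
# HOSTS_FILE is a hypothetical variable; it defaults to a temp file for safe testing.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

# add_host IP NAME: append "IP NAME" only if that exact line is not present yet.
add_host() {
    local ip="$1" name="$2"
    # -x matches the whole line, -F treats the pattern as a fixed string
    grep -qxF "$ip $name" "$HOSTS_FILE" || echo "$ip $name" >> "$HOSTS_FILE"
}

add_host 192.168.113.11 linux-node1
add_host 192.168.113.12 linux-node2
add_host 192.168.113.13 linux-node3
add_host 192.168.113.11 linux-node1   # repeated call adds nothing

cat "$HOSTS_FILE"
```

Because each entry is checked before it is appended, the snippet can be re-run on a host without producing duplicate lines.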

1.4 Set Up Passwordless SSH Authentication

[root@linux-node1 ~]# ssh-keygen -t rsa
[root@linux-node1 ~]# ssh-copy-id linux-node1
[root@linux-node1 ~]# ssh-copy-id linux-node2
[root@linux-node1 ~]# ssh-copy-id linux-node3

1.5 Create Directories

mkdir -p /opt/kubernetes/{cfg,bin,ssl,log}

1.6 Set Environment Variables

PATH=$PATH:$HOME/bin:/opt/kubernetes/bin

2. Docker Installation

2.1 Configure the Repository

[root@linux-node1 ~]# cd /etc/yum.repos.d/
[root@linux-node1 yum.repos.d]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

2.2 Install Docker

yum install -y docker-ce

2.3 Start Docker

systemctl start docker

3. Creating the CA Certificate

3.1 Install the CFSSL Commands

cd /usr/local/src
wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64
chmod +x cfssl*
mv cfssl-certinfo_linux-amd64 /opt/kubernetes/bin/cfssl-certinfo
mv cfssljson_linux-amd64 /opt/kubernetes/bin/cfssljson
mv cfssl_linux-amd64 /opt/kubernetes/bin/cfssl

Copy the cfssl binaries to the node1 and node2 hosts. If your environment has more nodes, copy them to every node.

scp /opt/kubernetes/bin/cfssl* 192.168.113.12:/opt/kubernetes/bin
scp /opt/kubernetes/bin/cfssl* 192.168.113.13:/opt/kubernetes/bin

3.2 Create the Certificate

mkdir -p /usr/local/src/ssl/

cd /usr/local/src/ssl/

cat <<EOF >ca-config.json

{

"signing": {

"default": {

"expiry": "8760h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "8760h"

}

}

}

}

EOF

cat <<EOF > ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

3.3 Distribute the Certificates to the Nodes

scp ca.csr ca.pem ca-key.pem ca-config.json 192.168.113.11:/opt/kubernetes/ssl
scp ca.csr ca.pem ca-key.pem ca-config.json 192.168.113.12:/opt/kubernetes/ssl
scp ca.csr ca.pem ca-key.pem ca-config.json 192.168.113.13:/opt/kubernetes/ssl
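The same three-host `scp` pattern recurs throughout this guide. As a rough sketch, it can be wrapped in a loop; the `distribute` function, the `NODES` list, and the `DRY_RUN` switch below are illustrative names introduced here, not part of the original steps. With `DRY_RUN=1` the function only prints the commands it would run.

```shell
#!/usr/bin/env bash
# NODES is an assumption based on the three hosts used in this guide.
NODES="192.168.113.11 192.168.113.12 192.168.113.13"

# distribute DEST FILE...: copy the given files to DEST on every node.
distribute() {
    local dest="$1"; shift
    local node
    for node in $NODES; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            # Only show what would be executed
            echo "scp $* $node:$dest"
        else
            scp "$@" "$node:$dest"
        fi
    done
}

# Preview the CA distribution step without touching the network
DRY_RUN=1 distribute /opt/kubernetes/ssl ca.csr ca.pem ca-key.pem ca-config.json
```

When a node is added later, only the `NODES` list needs to change.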

4. etcd Deployment

4.1 Extract the Packages

cd /usr/local/src
tar zxf kubernetes.tar.gz
tar zxf kubernetes-server-linux-amd64.tar.gz
tar zxf kubernetes-client-linux-amd64.tar.gz
tar zxf kubernetes-node-linux-amd64.tar.gz
tar zxf etcd-v3.2.18-linux-amd64.tar.gz
cd /usr/local/src/etcd-v3.2.18-linux-amd64

4.2 Distribute the Binaries

scp etcd etcdctl 192.168.113.11:/opt/kubernetes/bin/
scp etcd etcdctl 192.168.113.12:/opt/kubernetes/bin/
scp etcd etcdctl 192.168.113.13:/opt/kubernetes/bin/

4.2 Create the Certificate

cd /usr/local/src/ssl/

cat <<EOF > etcd-csr.json

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.113.11",

"192.168.113.12",

"192.168.113.13"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=./ca.pem \
  -ca-key=./ca-key.pem \
  -config=./ca-config.json \
  -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

4.3 Distribute the Certificates

scp etcd*.pem 192.168.113.11:/opt/kubernetes/ssl
scp etcd*.pem 192.168.113.12:/opt/kubernetes/ssl
scp etcd*.pem 192.168.113.13:/opt/kubernetes/ssl
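The CSR files in this guide (etcd, kubernetes, admin, kube-proxy, flanneld) differ only in their CN, O, and hosts fields. The sketch below generates such a file from parameters; `make_csr` is a hypothetical helper written for illustration, and its output is meant to be equivalent to the hand-written JSON, not a replacement mandated by cfssl.

```shell
#!/usr/bin/env bash
# make_csr CN ORG [HOST...]: print a cfssl CSR definition to stdout.
make_csr() {
    local cn="$1" org="$2"; shift 2
    local hosts="" h
    for h in "$@"; do
        # Build a comma-separated list of quoted host entries
        hosts="${hosts:+$hosts, }\"$h\""
    done
    cat <<EOF
{
  "CN": "$cn",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "BeiJing", "L": "BeiJing", "O": "$org", "OU": "System" }
  ]
}
EOF
}

# Same content as the etcd-csr.json written by hand above
make_csr etcd k8s 127.0.0.1 192.168.113.11 192.168.113.12 192.168.113.13
```

For example, `make_csr admin system:masters > admin-csr.json` would produce a client CSR with an empty hosts list.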

4.4 Configure etcd

# On the master (192.168.113.11)
cat <<EOF >/opt/kubernetes/cfg/etcd.conf
#[Member]
ETCD_NAME="etcd01"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.113.11:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.113.11:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.113.11:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.113.11:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.113.11:2380,etcd02=https://192.168.113.12:2380,etcd03=https://192.168.113.13:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF

# On node1 (192.168.113.12)
cat <<EOF >/opt/kubernetes/cfg/etcd.conf
#[Member]
ETCD_NAME="etcd02"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.113.12:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.113.12:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.113.12:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.113.12:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.113.11:2380,etcd02=https://192.168.113.12:2380,etcd03=https://192.168.113.13:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF

# On node2 (192.168.113.13)
cat <<EOF >/opt/kubernetes/cfg/etcd.conf
#[Member]
ETCD_NAME="etcd03"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.113.13:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.113.13:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.113.13:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.113.13:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.113.11:2380,etcd02=https://192.168.113.12:2380,etcd03=https://192.168.113.13:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
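The three etcd.conf files differ only in the member name and the node IP. A minimal sketch that renders the file from those two values follows; `gen_etcd_conf` and the `CLUSTER` variable are illustrative names, and the function prints to stdout so nothing is overwritten unless the output is redirected.

```shell
#!/usr/bin/env bash
# CLUSTER mirrors the ETCD_INITIAL_CLUSTER value used in this guide.
CLUSTER="etcd01=https://192.168.113.11:2380,etcd02=https://192.168.113.12:2380,etcd03=https://192.168.113.13:2380"

# gen_etcd_conf NAME IP: print the etcd.conf content for one member.
gen_etcd_conf() {
    local name="$1" ip="$2"
    cat <<EOF
#[Member]
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="$CLUSTER"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Preview the configuration for the second member
gen_etcd_conf etcd02 192.168.113.12
```

On a real node the output would be redirected, for example `gen_etcd_conf etcd02 192.168.113.12 > /opt/kubernetes/cfg/etcd.conf`.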

4.5 Create the etcd Service

#vim /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/opt/kubernetes/cfg/etcd.conf

ExecStart=/opt/kubernetes/bin/etcd \

--name=${ETCD_NAME} \

--data-dir=${ETCD_DATA_DIR} \

--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=/opt/kubernetes/ssl/etcd.pem \

--key-file=/opt/kubernetes/ssl/etcd-key.pem \

--peer-cert-file=/opt/kubernetes/ssl/etcd.pem \

--peer-key-file=/opt/kubernetes/ssl/etcd-key.pem \

--trusted-ca-file=/opt/kubernetes/ssl/ca.pem \

--peer-trusted-ca-file=/opt/kubernetes/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

4.6 Distribute the Service File

scp /usr/lib/systemd/system/etcd.service 192.168.113.12:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/etcd.service 192.168.113.13:/usr/lib/systemd/system/

4.6 Create the Data Directory and Start the Service

mkdir /var/lib/etcd
systemctl daemon-reload
systemctl enable etcd
systemctl start etcd

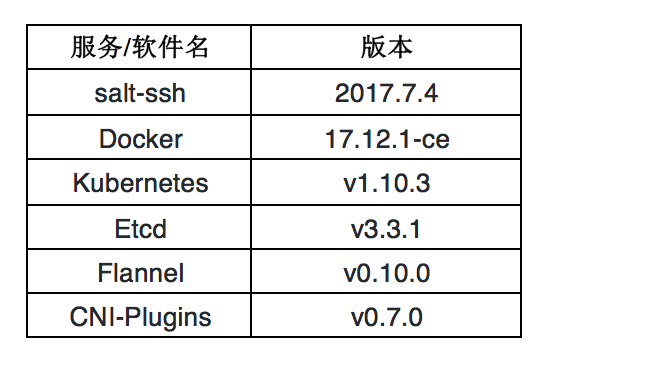

4.7 Check Cluster Health

etcdctl --endpoints=https://192.168.113.11:2379 \
  --ca-file=/opt/kubernetes/ssl/ca.pem \
  --cert-file=/opt/kubernetes/ssl/etcd.pem \
  --key-file=/opt/kubernetes/ssl/etcd-key.pem cluster-health

5. Master Deployment

5.1 Copy the Binaries

cd /usr/local/src/kubernetes
cp server/bin/kube-apiserver /opt/kubernetes/bin/
cp server/bin/kube-controller-manager /opt/kubernetes/bin/
cp server/bin/kube-scheduler /opt/kubernetes/bin/
cd /usr/local/src/ssl

5.1 Create the Certificate

cat <<EOF >kubernetes-csr.json

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.113.11",

"10.1.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \
  -ca-key=/opt/kubernetes/ssl/ca-key.pem \
  -config=/opt/kubernetes/ssl/ca-config.json \
  -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

5.2 Copy the Certificates

scp kubernetes*.pem 192.168.113.11:/opt/kubernetes/ssl/
scp kubernetes*.pem 192.168.113.12:/opt/kubernetes/ssl/
scp kubernetes*.pem 192.168.113.13:/opt/kubernetes/ssl/

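5.3 Create the Token File

Generate a random token, then save it to /opt/kubernetes/ssl/bootstrap-token.csv in the format token,user,uid,"group":

$ head -c 16 /dev/urandom | od -An -t x | tr -d ' '
75af759982f66e18db698d54c33a927
$ cat /opt/kubernetes/ssl/bootstrap-token.csv
75af759982f66e18db698d54c33a927,kubelet-bootstrap,10001,"system:kubelet-bootstrap"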
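The token step can be scripted so that the value is generated and written in one pass. The sketch below is one possible way to do it; `TOKEN_DIR` and `BOOTSTRAP_TOKEN` are illustrative variable names, and the file is written to a temporary directory by default (set `TOKEN_DIR=/opt/kubernetes/ssl` to write the real file).

```shell
#!/usr/bin/env bash
# TOKEN_DIR is a hypothetical variable; it defaults to a temp directory for safe testing.
TOKEN_DIR="${TOKEN_DIR:-$(mktemp -d)}"

# 16 random bytes rendered as hex give a 32-character token
BOOTSTRAP_TOKEN="$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')"

# Token auth file format: token,user,uid,"group"
printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' \
    "$BOOTSTRAP_TOKEN" > "$TOKEN_DIR/bootstrap-token.csv"

cat "$TOKEN_DIR/bootstrap-token.csv"
```

Keeping the token in a variable also makes it available later for the `--token` argument of `kubectl config set-credentials`.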

5.4 Create Basic Username and Password Authentication

cat <<EOF >/opt/kubernetes/ssl/basic-auth.csv
admin,admin,1
readonly,readonly,2
EOF

5.5 Deploy the Kubernetes API Server

vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
ExecStart=/opt/kubernetes/bin/kube-apiserver \
  --admission-control=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota,NodeRestriction \
  --bind-address=192.168.113.11 \
  --insecure-bind-address=127.0.0.1 \
  --authorization-mode=Node,RBAC \
  --runtime-config=rbac.authorization.k8s.io/v1 \
  --kubelet-https=true \
  --anonymous-auth=false \
  --basic-auth-file=/opt/kubernetes/ssl/basic-auth.csv \
  --enable-bootstrap-token-auth \
  --token-auth-file=/opt/kubernetes/ssl/bootstrap-token.csv \
  --service-cluster-ip-range=10.1.0.0/16 \
  --service-node-port-range=20000-40000 \
  --tls-cert-file=/opt/kubernetes/ssl/kubernetes.pem \
  --tls-private-key-file=/opt/kubernetes/ssl/kubernetes-key.pem \
  --client-ca-file=/opt/kubernetes/ssl/ca.pem \
  --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
  --etcd-cafile=/opt/kubernetes/ssl/ca.pem \
  --etcd-certfile=/opt/kubernetes/ssl/kubernetes.pem \
  --etcd-keyfile=/opt/kubernetes/ssl/kubernetes-key.pem \
  --etcd-servers=https://192.168.113.11:2379,https://192.168.113.12:2379,https://192.168.113.13:2379 \
  --enable-swagger-ui=true \
  --allow-privileged=true \
  --audit-log-maxage=30 \
  --audit-log-maxbackup=3 \
  --audit-log-maxsize=100 \
  --audit-log-path=/opt/kubernetes/log/api-audit.log \
  --event-ttl=1h \
  --v=2 \
  --logtostderr=false \
  --log-dir=/opt/kubernetes/log
Restart=on-failure
RestartSec=5
Type=notify
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

5.6 Start the API Server

systemctl daemon-reload
systemctl enable kube-apiserver
systemctl start kube-apiserver
systemctl status kube-apiserver

5.7 Deploy the Controller Manager Service

$ vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]
ExecStart=/opt/kubernetes/bin/kube-controller-manager \
  --address=127.0.0.1 \
  --master=http://127.0.0.1:8080 \
  --allocate-node-cidrs=true \
  --service-cluster-ip-range=10.1.0.0/16 \
  --cluster-cidr=10.2.0.0/16 \
  --cluster-name=kubernetes \
  --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \
  --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \
  --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \
  --root-ca-file=/opt/kubernetes/ssl/ca.pem \
  --leader-elect=true \
  --v=2 \
  --logtostderr=false \
  --log-dir=/opt/kubernetes/log
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

5.8 Start the Controller Manager

systemctl daemon-reload
systemctl enable kube-controller-manager
systemctl start kube-controller-manager

5.9 Deploy the Kubernetes Scheduler

$ vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]
ExecStart=/opt/kubernetes/bin/kube-scheduler \
  --address=127.0.0.1 \
  --master=http://127.0.0.1:8080 \
  --leader-elect=true \
  --v=2 \
  --logtostderr=false \
  --log-dir=/opt/kubernetes/log
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

5.10 Start the Scheduler

systemctl daemon-reload
systemctl enable kube-scheduler
systemctl start kube-scheduler
systemctl status kube-scheduler

6. Deploying the kubectl Command-Line Tool

6.1 Copy the Binary

cd /usr/local/src/kubernetes/client/bin
cp kubectl /opt/kubernetes/bin/
cd /usr/local/src/ssl/

6.2 Create the Admin Certificate Signing Request

cat <<EOF > admin-csr.json

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=./ca.pem \
  -ca-key=./ca-key.pem \
  -config=./ca-config.json \
  -profile=kubernetes admin-csr.json | cfssljson -bare admin

6.3 Copy the Certificates

mv admin*.pem /opt/kubernetes/ssl/

6.4 Set Cluster Parameters

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=https://192.168.113.11:6443

kubectl config set-credentials admin \
  --client-certificate=/opt/kubernetes/ssl/admin.pem \
  --embed-certs=true \
  --client-key=/opt/kubernetes/ssl/admin-key.pem

kubectl config set-context kubernetes \
  --cluster=kubernetes \
  --user=admin

kubectl config use-context kubernetes

6.5 Check Cluster Status with kubectl

[root@linux-node1 ssl]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

7. Node Deployment

7.1 Deploy kubelet

cd /usr/local/src/kubernetes/server/bin/
cp kubelet kube-proxy /opt/kubernetes/bin/
scp kubelet kube-proxy 192.168.113.12:/opt/kubernetes/bin/
scp kubelet kube-proxy 192.168.113.13:/opt/kubernetes/bin/
cd /usr/local/src/ssl

7.2 Create the Role Binding

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

7.3 Set Authentication Parameters

# Create the kubelet bootstrapping kubeconfig file
# Set cluster parameters
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=https://192.168.113.11:6443 \
  --kubeconfig=bootstrap.kubeconfig

# Set client authentication parameters (use the token from bootstrap-token.csv)
$ cat /opt/kubernetes/ssl/bootstrap-token.csv
75af759982f66e18db698d54c33a927,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

kubectl config set-credentials kubelet-bootstrap \
  --token=75af759982f66e18db698d54c33a927 \
  --kubeconfig=bootstrap.kubeconfig

# Set the context
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kubelet-bootstrap \
  --kubeconfig=bootstrap.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

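7.4 Copy the Bootstrap Kubeconfig to the Nodes

scp bootstrap.kubeconfig 192.168.113.11:/opt/kubernetes/cfg
scp bootstrap.kubeconfig 192.168.113.12:/opt/kubernetes/cfg
scp bootstrap.kubeconfig 192.168.113.13:/opt/kubernetes/cfg

⚠️ Note: whenever a new node is added later, this file must be copied to that node as well.

7.5 Configure CNI Support (All Hosts)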

mkdir -p /etc/cni/net.d

cat <<EOF >/etc/cni/net.d/10-default.conf

{

"name": "flannel",

"type": "flannel",

"delegate": {

"bridge": "docker0",

"isDefaultGateway": true,

"mtu": 1400

}

}

EOF

7.6 Create the kubelet Directory (All Hosts)

mkdir /var/lib/kubelet

7.6 Create the kubelet Service

$ vim /usr/lib/systemd/system/kubelet.service

[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=docker.service
Requires=docker.service

[Service]
WorkingDirectory=/var/lib/kubelet
ExecStart=/opt/kubernetes/bin/kubelet \
  --address=192.168.113.11 \
  --hostname-override=192.168.113.11 \
  --pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.0 \
  --experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \
  --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \
  --cert-dir=/opt/kubernetes/ssl \
  --network-plugin=cni \
  --cni-conf-dir=/etc/cni/net.d \
  --cni-bin-dir=/opt/kubernetes/bin/cni \
  --cluster-dns=10.1.0.2 \
  --cluster-domain=cluster.local. \
  --hairpin-mode hairpin-veth \
  --allow-privileged=true \
  --fail-swap-on=false \
  --v=2 \
  --logtostderr=false \
  --log-dir=/opt/kubernetes/log
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

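7.7 Copy the Service File to the Nodes

scp /usr/lib/systemd/system/kubelet.service 192.168.113.12:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/kubelet.service 192.168.113.13:/usr/lib/systemd/system/

Note: on each node, change the IP in --address and --hostname-override to that node's own IP.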
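Editing the IP by hand on each node is easy to get wrong. As a rough sketch, the substitution can be done with `sed`; `render_unit` and `MASTER_IP` are illustrative names, and the example works on a small temporary template instead of the real unit file. It assumes the master IP appears in the unit only where the node IP belongs, which holds for the kubelet unit shown above.

```shell
#!/usr/bin/env bash
MASTER_IP="192.168.113.11"

# render_unit TEMPLATE NODE_IP: print TEMPLATE with the master IP replaced by NODE_IP.
render_unit() {
    local template="$1" node_ip="$2" pattern
    # Escape the dots so sed matches them literally
    pattern="$(printf '%s' "$MASTER_IP" | sed 's/\./\\./g')"
    sed "s/${pattern}/${node_ip}/g" "$template"
}

# Demonstration on a two-line excerpt of the unit file
tmpl="$(mktemp)"
cat > "$tmpl" <<'EOF'
  --address=192.168.113.11 \
  --hostname-override=192.168.113.11 \
EOF

render_unit "$tmpl" 192.168.113.12
```

On a node, the same idea could be applied in place with `sed -i` against /usr/lib/systemd/system/kubelet.service, followed by `systemctl daemon-reload`.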

7.7 Start the Service (Nodes Only)

systemctl daemon-reload
systemctl enable kubelet
systemctl start kubelet

7.8 View the CSR Requests on the Server

[root@linux-node1 ssl]# kubectl get csr
NAME AGE REQUESTOR CONDITION
node-csr-B6rCRxM_l9oKIPJGAitaHS3XX13aq_bQadBdS_NBacA 3m kubelet-bootstrap Pending
node-csr-muQH7qofTiK1d_DsT6Oqa9ieNNkIIyNn4M7xwjIM66c 1m kubelet-bootstrap Pending

7.9 Approve the kubelet TLS Certificate Requests

kubectl get csr|grep 'Pending' | awk 'NR>0{print $1}'| xargs kubectl certificate approve

[root@linux-node1 ssl]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-B6rCRxM_l9oKIPJGAitaHS3XX13aq_bQadBdS_NBacA 4m kubelet-bootstrap Approved,Issued

node-csr-muQH7qofTiK1d_DsT6Oqa9ieNNkIIyNn4M7xwjIM66c 1m kubelet-bootstrap Approved,Issued
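The approval one-liner above selects requests with `grep 'Pending'`. A slightly stricter variant, sketched below, skips the header row and matches only rows whose CONDITION column is exactly Pending; `pending_csrs` is a hypothetical helper that reads the `kubectl get csr` table from standard input, shown here against sample text so it runs without a cluster.

```shell
#!/usr/bin/env bash
# pending_csrs: read `kubectl get csr` output on stdin and print the names
# of requests whose last column (CONDITION) is exactly "Pending".
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Sample input modelled on the output shown in this guide
cat <<'EOF' | pending_csrs
NAME AGE REQUESTOR CONDITION
node-csr-B6rCRxM_l9oKIPJGAitaHS3XX13aq_bQadBdS_NBacA 4m kubelet-bootstrap Approved,Issued
node-csr-muQH7qofTiK1d_DsT6Oqa9ieNNkIIyNn4M7xwjIM66c 1m kubelet-bootstrap Pending
EOF
```

Against a live cluster the helper would sit in the same pipeline as before: `kubectl get csr | pending_csrs | xargs kubectl certificate approve`.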

[root@linux-node1 bin]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.113.12 NotReady <none> 3s v1.10.1

192.168.113.13 NotReady <none> 3s v1.10.1

7.9 Pull the pause Image on the Nodes

docker pull mirrorgooglecontainers/pause-amd64:3.0

7.10 Restart kubelet

systemctl restart kubelet

8. Deploying kube-proxy

8.1 Set Up LVS (IPVS) for kube-proxy

yum install -y ipvsadm ipset conntrack
cd /usr/local/src/ssl/

8.2 Create the Certificate

cat <<EOF >kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=./ca.pem \
  -ca-key=./ca-key.pem \
  -config=./ca-config.json \
  -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

8.3 Distribute the Certificates to the Nodes

scp kube-proxy*.pem 192.168.113.11:/opt/kubernetes/ssl/
scp kube-proxy*.pem 192.168.113.12:/opt/kubernetes/ssl/
scp kube-proxy*.pem 192.168.113.13:/opt/kubernetes/ssl/

8.4 Create the kube-proxy Kubeconfig

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=https://192.168.113.11:6443 \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=/opt/kubernetes/ssl/kube-proxy.pem \
  --client-key=/opt/kubernetes/ssl/kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

8.5 Distribute the Kubeconfig

scp kube-proxy.kubeconfig 192.168.113.11:/opt/kubernetes/cfg/
scp kube-proxy.kubeconfig 192.168.113.12:/opt/kubernetes/cfg/
scp kube-proxy.kubeconfig 192.168.113.13:/opt/kubernetes/cfg/

8.6 Create the Service Unit

vim /usr/lib/systemd/system/kube-proxy.service

[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
WorkingDirectory=/var/lib/kube-proxy
ExecStart=/opt/kubernetes/bin/kube-proxy \
  --bind-address=192.168.113.11 \
  --hostname-override=192.168.113.11 \
  --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig \
  --masquerade-all \
  --feature-gates=SupportIPVSProxyMode=true \
  --proxy-mode=ipvs \
  --ipvs-min-sync-period=5s \
  --ipvs-sync-period=5s \
  --ipvs-scheduler=rr \
  --v=2 \
  --logtostderr=false \
  --log-dir=/opt/kubernetes/log
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

Note: on each node, change --bind-address and --hostname-override to that node's own IP.

8.7 Start the Service (Nodes)

mkdir /var/lib/kube-proxy -p
systemctl daemon-reload
systemctl enable kube-proxy
systemctl start kube-proxy

9. Deploying flannel with CNI Integration

9.1 Packages

tar xf flannel-v0.10.0-linux-amd64.tar.gz
scp flanneld mk-docker-opts.sh 192.168.113.11:/opt/kubernetes/bin/
scp flanneld mk-docker-opts.sh 192.168.113.12:/opt/kubernetes/bin/
scp flanneld mk-docker-opts.sh 192.168.113.13:/opt/kubernetes/bin/
cd /usr/local/src/kubernetes/cluster/centos/node/bin/
scp remove-docker0.sh 192.168.113.11:/opt/kubernetes/bin/
scp remove-docker0.sh 192.168.113.12:/opt/kubernetes/bin/
scp remove-docker0.sh 192.168.113.13:/opt/kubernetes/bin/

9.2 Create the Certificate

cd /usr/local/src/ssl

cat <<EOF >flanneld-csr.json

{

"CN": "flanneld",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \
  -ca-key=/opt/kubernetes/ssl/ca-key.pem \
  -config=/opt/kubernetes/ssl/ca-config.json \
  -profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

9.3 Copy the Certificates

scp flanneld*.pem 192.168.113.11:/opt/kubernetes/ssl/
scp flanneld*.pem 192.168.113.12:/opt/kubernetes/ssl/
scp flanneld*.pem 192.168.113.13:/opt/kubernetes/ssl/

9.2 Configure flannel

vim /opt/kubernetes/cfg/flannel

FLANNEL_ETCD="-etcd-endpoints=https://192.168.113.11:2379,https://192.168.113.12:2379,https://192.168.113.13:2379"
FLANNEL_ETCD_KEY="-etcd-prefix=/kubernetes/network"
FLANNEL_ETCD_CAFILE="--etcd-cafile=/opt/kubernetes/ssl/ca.pem"
FLANNEL_ETCD_CERTFILE="--etcd-certfile=/opt/kubernetes/ssl/flanneld.pem"
FLANNEL_ETCD_KEYFILE="--etcd-keyfile=/opt/kubernetes/ssl/flanneld-key.pem"

9.3 flannel Service

vim /usr/lib/systemd/system/flannel.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

Before=docker.service

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/flannel

ExecStartPre=/opt/kubernetes/bin/remove-docker0.sh

ExecStart=/opt/kubernetes/bin/flanneld ${FLANNEL_ETCD} ${FLANNEL_ETCD_KEY} ${FLANNEL_ETCD_CAFILE} ${FLANNEL_ETCD_CERTFILE} ${FLANNEL_ETCD_KEYFILE}

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -d /run/flannel/docker

Type=notify

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

9.4 Copy the Files to the Nodes

scp /opt/kubernetes/cfg/flannel 192.168.113.11:/opt/kubernetes/cfg/
scp /opt/kubernetes/cfg/flannel 192.168.113.12:/opt/kubernetes/cfg/
scp /opt/kubernetes/cfg/flannel 192.168.113.13:/opt/kubernetes/cfg/
scp /usr/lib/systemd/system/flannel.service 192.168.113.12:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/flannel.service 192.168.113.13:/usr/lib/systemd/system/

9.5 CNI

mkdir -p /opt/kubernetes/bin/cni
cd /usr/local/src/
tar xf cni-plugins-amd64-v0.7.1.tgz -C /opt/kubernetes/bin/cni/
scp -r /opt/kubernetes/bin/cni/* 192.168.113.12:/opt/kubernetes/bin/cni/
scp -r /opt/kubernetes/bin/cni/* 192.168.113.13:/opt/kubernetes/bin/cni/

9.6 Create the etcd Key

etcdctl --ca-file /opt/kubernetes/ssl/ca.pem --cert-file /opt/kubernetes/ssl/flanneld.pem --key-file /opt/kubernetes/ssl/flanneld-key.pem \
  --no-sync -C https://192.168.113.11:2379,https://192.168.113.12:2379,https://192.168.113.13:2379 \
  mk /kubernetes/network/config '{ "Network": "10.2.0.0/16", "Backend": { "Type": "vxlan", "VNI": 1 }}'

9.6 Start flannel and Check Its Status

chmod +x /opt/kubernetes/bin/*
systemctl start flannel

9.7 Configure Docker to Use flannel

vim /usr/lib/systemd/system/docker.service

[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network-online.target firewalld.service flannel.service
Wants=network-online.target
Requires=flannel.service

[Service]
Type=notify
EnvironmentFile=-/run/flannel/docker
ExecStart=/usr/bin/dockerd $DOCKER_OPTS
ExecReload=/bin/kill -s HUP $MAINPID
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TimeoutStartSec=0
Delegate=yes
KillMode=process
Restart=on-failure
StartLimitBurst=3
StartLimitInterval=60s

[Install]
WantedBy=multi-user.target

9.8 Restart Docker

systemctl daemon-reload
systemctl restart docker

9.9 Create a Deployment and Test Network Connectivity

# kubectl run net-test --image=alpine --replicas=2 sleep 360000
[root@linux-node1 bin]# kubectl get pod -o wide
NAME READY STATUS RESTARTS AGE IP NODE
net-test-5767cb94df-4qbct 1/1 Running 0 16s 10.2.41.2 192.168.113.13
net-test-5767cb94df-r22l8 1/1 Running 0 16s 10.2.99.2 192.168.113.12
[root@linux-node1 bin]# ping 10.2.41.2
PING 10.2.41.2 (10.2.41.2) 56(84) bytes of data.
64 bytes from 10.2.41.2: icmp_seq=1 ttl=63 time=0.806 ms

$ kubectl run nginx --image=nginx --replicas=1
$ kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort
[root@linux-node2 ~]# curl -I 10.1.52.33:88
HTTP/1.1 200 OK
Server: nginx/1.15.0
Date: Thu, 28 Jun 2018 08:08:32 GMT
Content-Type: text/html
Content-Length: 612
Last-Modified: Tue, 05 Jun 2018 12:00:18 GMT
Connection: keep-alive
ETag: "5b167b52-264"
Accept-Ranges: bytes

10. Add-ons

10.1 CoreDNS

[root@linux-node2 CoreDNS]# cat coredns.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: Reconcile
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: EnsureExists
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
  labels:
    addonmanager.kubernetes.io/mode: EnsureExists
data:
  Corefile: |
    .:53 {
        errors
        health
        kubernetes cluster.local. in-addr.arpa ip6.arpa {
            pods insecure
            upstream
            fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        proxy . /etc/resolv.conf
        cache 30
    }
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "CoreDNS"
spec:
  replicas: 2
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: coredns
  template:
    metadata:
      labels:
        k8s-app: coredns
    spec:
      serviceAccountName: coredns
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      containers:
      - name: coredns
        image: coredns/coredns:1.0.6
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
            - key: Corefile
              path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: coredns
  clusterIP: 10.1.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP

$ kubectl create -f .

$ kubectl get deployment -n kube-system

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

coredns 2 2 2 2 19m

$ kubectl get service -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

coredns ClusterIP 10.1.0.2 <none> 53/UDP,53/TCP 19m
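The CoreDNS service IP (10.1.0.2) has to match the kubelet `--cluster-dns` value and fall inside the API server's `--service-cluster-ip-range` (10.1.0.0/16), while pod IPs come from the flannel network (10.2.0.0/16). The sketch below is a small sanity check for such addresses; `ip_to_int` and `in_cidr` are hypothetical helpers written for illustration and handle IPv4 only.

```shell
#!/usr/bin/env bash
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    # 10# forces base 10 so octets with leading zeros are not read as octal
    echo $(( (10#$a << 24) + (10#$b << 16) + (10#$c << 8) + 10#$d ))
}

# in_cidr IP NET/BITS: succeed if IP lies inside the given network.
in_cidr() {
    local ip="$1" net="${2%/*}" bits="${2#*/}"
    local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

SERVICE_CIDR="10.1.0.0/16"   # --service-cluster-ip-range in this guide
for ip in 10.1.0.1 10.1.0.2 10.2.41.2; do
    if in_cidr "$ip" "$SERVICE_CIDR"; then
        echo "$ip is inside $SERVICE_CIDR"
    else
        echo "$ip is outside $SERVICE_CIDR"
    fi
done
```

The first two addresses are the API service IP from the kubernetes certificate and the DNS service IP; the third is a pod IP from the connectivity test and belongs to the pod network instead.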

[root@linux-node1 CoreDNS]# kubectl run dns-test1 --rm -it --image=alpine /bin/sh

If you don't see a command prompt, try pressing enter.

/ # ping baidu.com

PING baidu.com (220.181.57.216): 56 data bytes

64 bytes from 220.181.57.216: seq=0 ttl=127 time=42.444 ms

^C

--- baidu.com ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 42.444/42.444/42.444 ms

/ # nslookup nginx

nslookup: can't resolve '(null)': Name does not resolve

Name: nginx

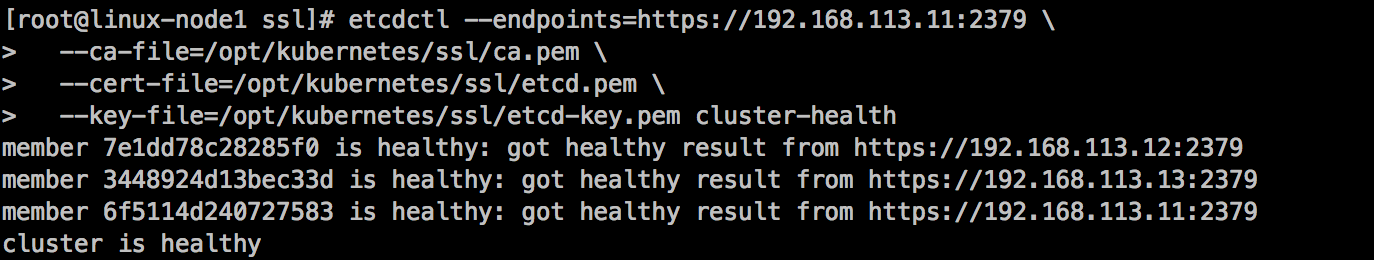

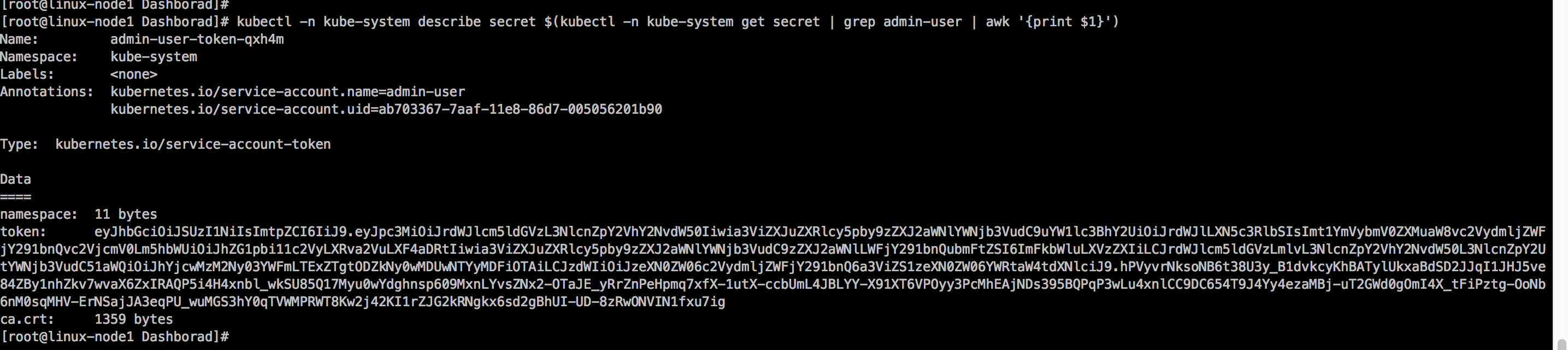

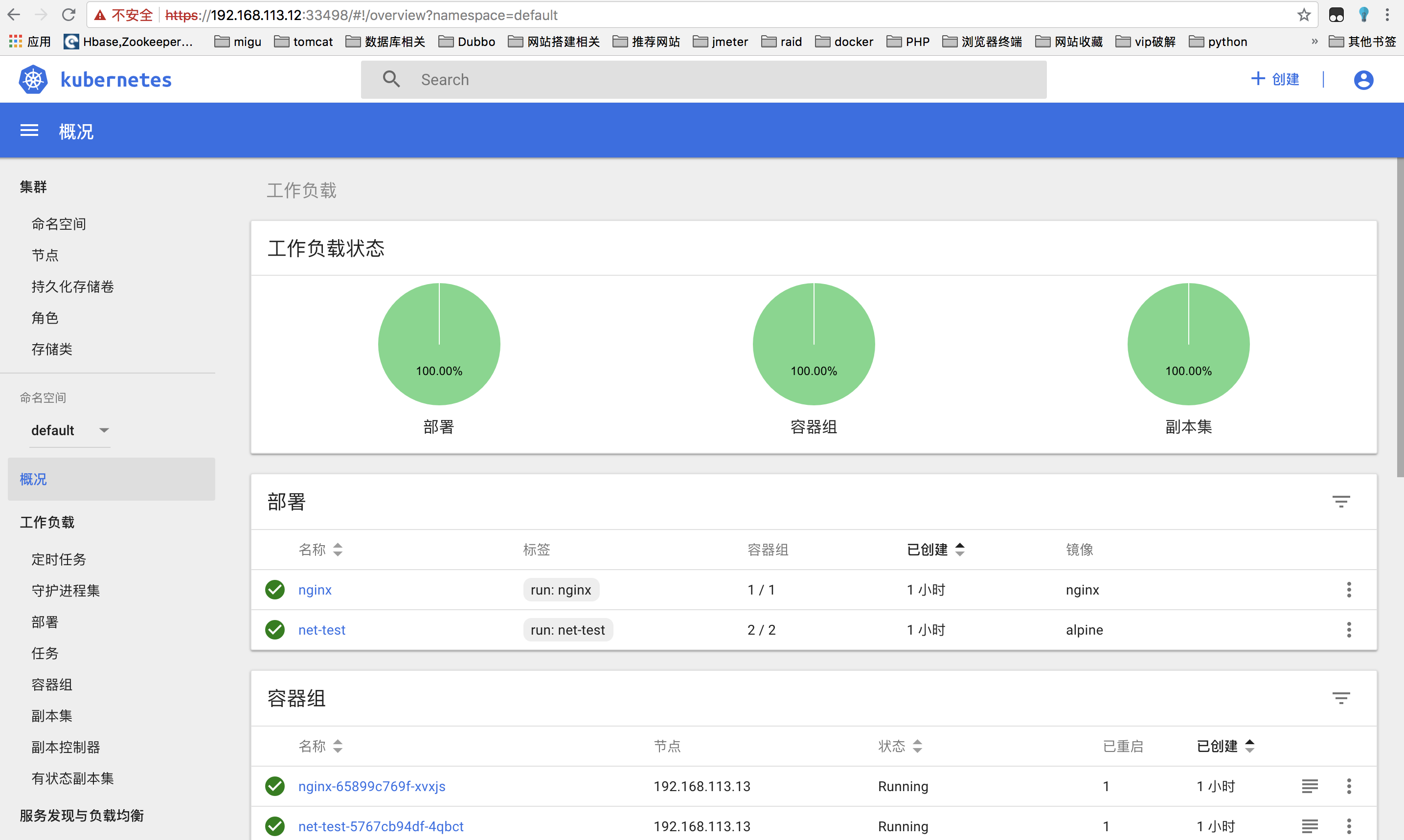

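Address 1: 10.1.52.33 nginx.default.svc.cluster.local

10.2 Dashboard UI

Download the YAML files and upload them to the server: https://pan.baidu.com/s/1b_u-GB4WAIetsSG8Sh-nDQ

# Create the resources
kubectl create -f .

Wait a few minutes; the first installation has to pull the images.

Access: https://192.168.113.12:33498/

Choose token login and obtain the token: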

$ kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

Enter the token to log in.
